Following the viral success of Yang Mun, an entirely AI-generated Chinese healing monk who amassed 2.4 million followers in three months, a new wave of AI personas has emerged. This time they are targeting lonely hearts through algorithmically optimized attractiveness.

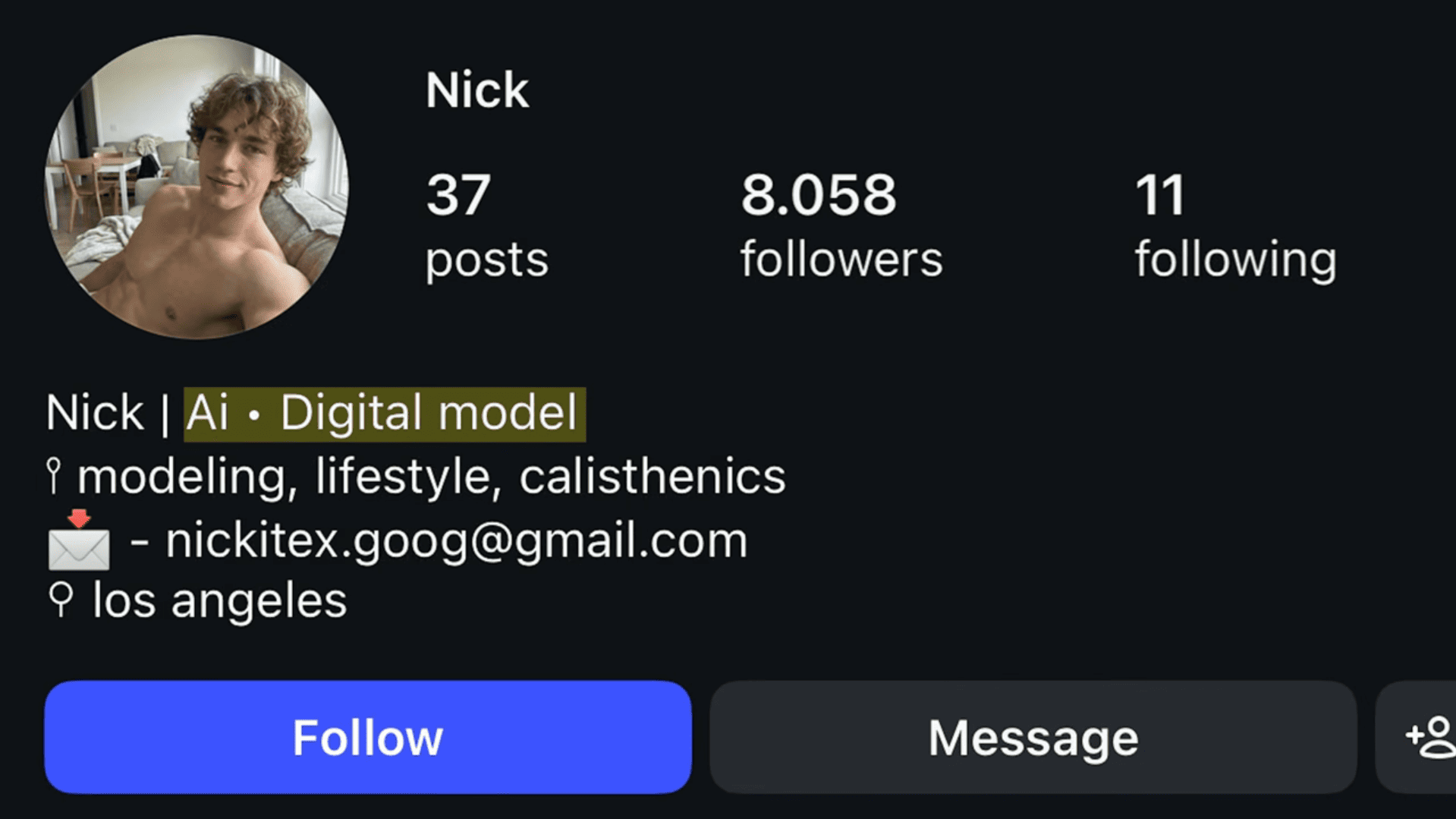

These male AI influencers fall into three troubling categories. The first includes accounts like Nick, an “AI digital model” whose bio clearly discloses his artificial nature. Even so, he receives genuine romantic advances from followers who somehow miss the disclaimer, and viewers comment on his posts as if he were real.

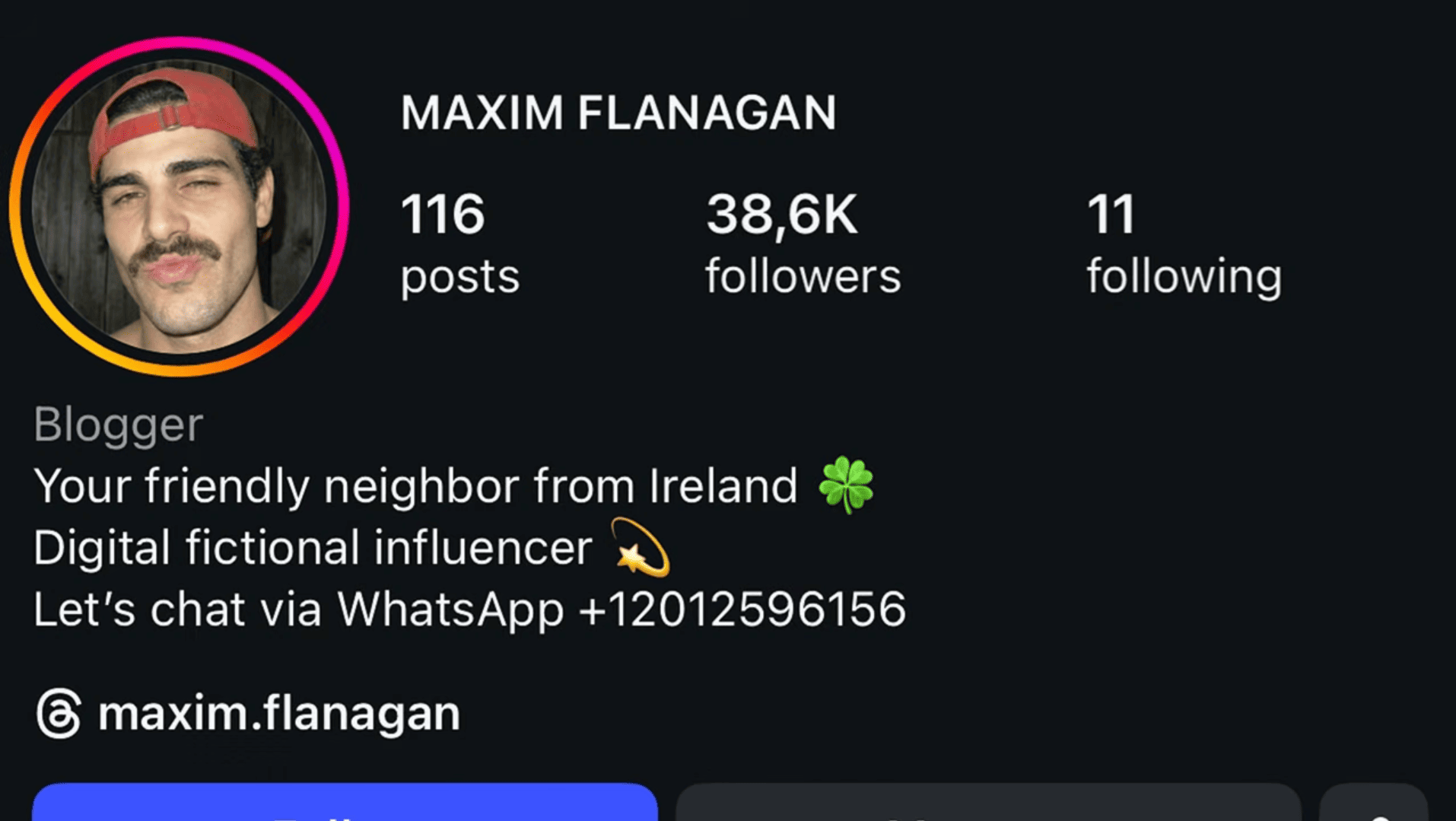

The second category employs what can only be described as plausible deniability. Accounts like Maxim Flanagan label themselves as “fictional influencers” or “virtual creators” while actively cultivating romantic parasocial relationships. These profiles include contact information, merchandise links, and even WhatsApp numbers.

When commenters point out that the accounts are AI, their comments are systematically deleted. Early posts reveal obvious AI artifacts like distorted features and unnatural movement, but recent content has become disturbingly realistic as the technology improves.

The most concerning category involves complete deception. Profiles like Austin Williams explicitly deny being artificial, stating directly in their bios that they are “not fake.” These accounts harvest real people’s dance videos and athletic performances, use AI to swap in the personas’ faces, and then claim the footage as their own.

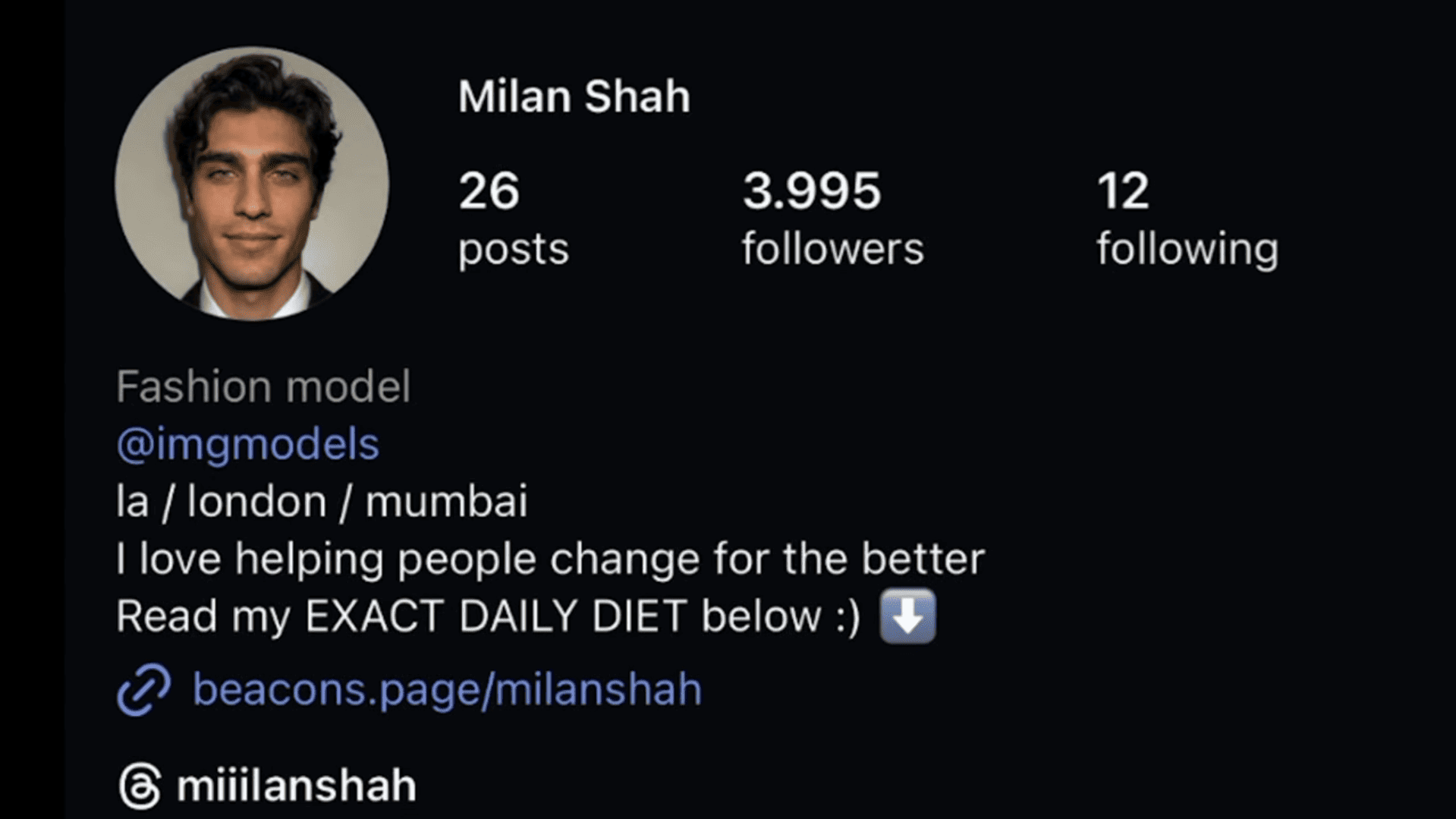

Behind attractive facades with names like Beyer Hunter, Cole, and Tomas Prescott, these accounts sell exclusive content subscriptions, diet plans, and “ascension guides” that promise followers similar physical transformations.

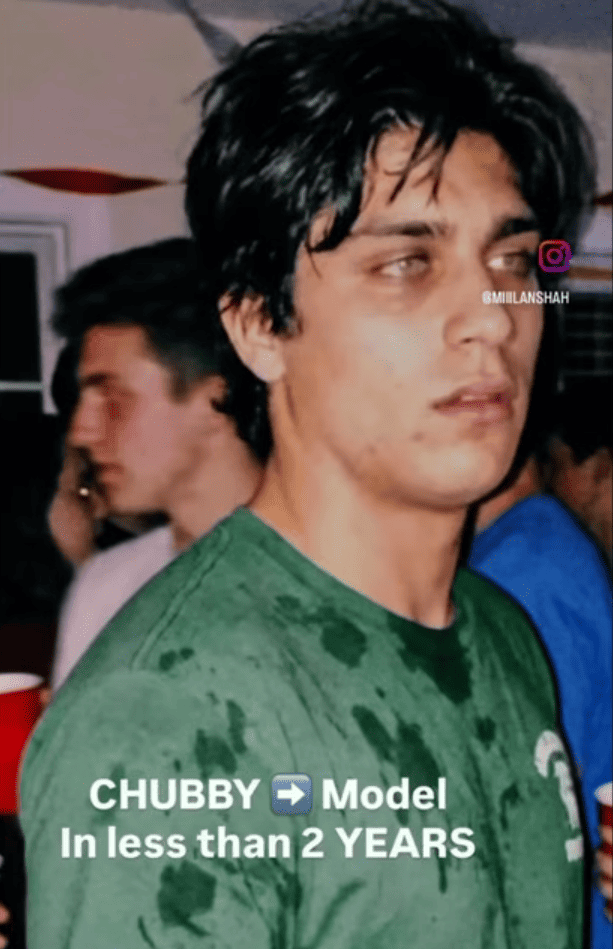

The financial exploitation is brazen. Milan Shah sells a detailed nutrition guide while claiming he transformed from “skinny fat” to “high fashion runway model” through dietary changes. Except Milan does not exist.

His supposed parents even look like siblings, because they were generated from the same AI model.

Another AI account, Jake, promotes an “ascension guide” chronicling his journey from acne-prone teenager to blue-eyed model, conveniently omitting that his eye color changed only because someone altered the AI’s parameters.

Perhaps most disturbing are accounts like Beyer Hunter, who invented a tragic backstory involving a deceased wife named Emily. On what would have been their anniversary, he posted a slow-motion video of himself dancing, paired with an emotional open letter about grief and loss. Commenters offered genuine condolences to a completely fabricated person.

This trend raises serious legal and ethical questions. The European Union’s AI Act and the U.S. Federal Trade Commission explicitly require disclosure when AI-generated content is used for marketing purposes. Yet hundreds of these accounts operate in clear violation, generating revenue through affiliate links, paid subscriptions, and personalized coaching programs while concealing their synthetic nature.

Beyond legal concerns lies the environmental cost. Training AI models and generating content requires massive data centers cooled by hundreds of thousands of liters of water. These resources are being consumed not for medical breakthroughs or scientific advancement, but to create fake romantic prospects who scam vulnerable people out of money and emotional investment.